AI writing detectors are flagging the U.S. Constitution, one of
America's most significant legal documents, as a piece of
AI-generated text. This controversy raises many questions, most
importantly: how could a document written centuries before the
advent of AI be mistaken for AI-generated text?

AI writing detectors are designed to identify text produced by AI
models such as ChatGPT. When these detectors analyze the U.S.
Constitution, they often conclude that it was likely written by an
AI. This is, of course, impossible unless James Madison, one of the
key drafters of the Constitution, had access to time travel and
ChatGPT. So, what is the reason behind these false positives?

The use of AI writing tools, particularly generative AI like
ChatGPT, has stirred up a whirlwind in the educational sector.
There's a growing concern that these tools could disrupt traditional
teaching methods, particularly the use of essays to assess a
student's understanding of a topic. In response, educators are
turning to AI writing detectors, such as GPTZero, ZeroGPT, and
OpenAI's Text Classifier, in an attempt to maintain the status quo.
However, these tools have proven to be unreliable due to their
propensity for false positives.

For instance, when GPTZero is fed a section of the U.S.
Constitution, it declares that the text is likely to be written
entirely by AI. Similar results have been observed with other AI
detectors, leading to a wave of confusion and humor about the
possibility of the founding fathers being robots. Even religious
texts, like sections from the Bible, have been flagged as
AI-generated.

To comprehend why these tools make such glaring errors, we need
to delve into the underlying principles of AI detection. AI writing
detectors use AI models that have been trained on a vast body of
text, along with a set of rules to determine if the writing is
human or AI-generated. For example, GPTZero uses a neural network
trained on a diverse corpus of human-written and AI-generated text.
It then uses properties like perplexity and burstiness to evaluate
the text.

Perplexity, in the context of machine learning, measures how
much a piece of text deviates from what an AI model has learned
during its training. The idea is that AI models like ChatGPT, when
generating text, will naturally gravitate towards what they know
best, which comes from their training data. The closer the output
is to the training data, the lower the perplexity rating. However,
humans can also write with low perplexity, especially when
imitating a formal style used in law or certain types of academic
writing.
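
To make the perplexity idea concrete, here is a minimal sketch. It is not how GPTZero or any real detector is implemented: it assumes a toy unigram (single-word frequency) model with add-one smoothing in place of a large neural language model, and the function names `train_unigram` and `perplexity` are hypothetical. It only illustrates that text resembling the training data scores a lower perplexity than text the model has never seen.

```python
import math
from collections import Counter


def train_unigram(corpus):
    """Count word frequencies in the training text (a toy stand-in for a language model)."""
    return Counter(corpus.lower().split())


def perplexity(text, counts):
    """Perplexity of `text` under an add-one-smoothed unigram model.

    Computed as exp of the average negative log-probability per word:
    lower values mean the text looks more like the training data.
    """
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one word")
    total = sum(counts.values())
    vocab_size = len(counts) + 1  # +1 reserves one slot for all unseen words
    log_prob = 0.0
    for tok in tokens:
        # Counter returns 0 for unseen words, so they still get a small probability.
        log_prob += math.log((counts[tok] + 1) / (total + vocab_size))
    return math.exp(-log_prob / len(tokens))


# Assumed toy training text: a formal, widely reproduced sentence.
model = train_unigram(
    "we the people of the united states in order to form a more perfect union"
)

familiar = perplexity("we the people of the united states", model)
unfamiliar = perplexity("purple giraffes juggle quantum marmalade", model)

print(f"familiar text perplexity:   {familiar:.2f}")
print(f"unfamiliar text perplexity: {unfamiliar:.2f}")
```

In this sketch the familiar sentence always scores lower, because every one of its words appears in the training text. A detector that treats low perplexity as a sign of machine authorship would therefore lean towards flagging it, even though a human wrote it.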

In other words, just because something sounds generic doesn't
mean it comes from AI, yet that seems to be how these programs are
judging things.

This phenomenon underscores the limitations of AI writing
detectors and the need for a more...

We recently took a look at the

top contractor of the Foundation, which is

We recently took a look at the

top contractor of the Foundation, which is

Hoy

lanzamos

Hoy

lanzamos  .

.

The

FBI is hiding information related to its attacks on First Amendment

rights.

The

FBI is hiding information related to its attacks on First Amendment

rights.

.jpg?itok=Gfka5YT_)

Family

members aren't always entitled to unwavering support, but they are

entitled to silence at a bare minimum.

Family

members aren't always entitled to unwavering support, but they are

entitled to silence at a bare minimum. 'These

girls are worried about their selfie and not listening to the

song,' Lambert said as she stopped the show.

'These

girls are worried about their selfie and not listening to the

song,' Lambert said as she stopped the show. John

Kirby defended abortion as a 'sacred obligation' of the military to

'female service members' and 'transgender individuals who

qualify.'

John

Kirby defended abortion as a 'sacred obligation' of the military to

'female service members' and 'transgender individuals who

qualify.'

Oversight

Committee Chair James Comer should corral Republicans before

Wednesday to coordinate the questioning of the whistleblowers so

the country learns the depth of the scandal.

Oversight

Committee Chair James Comer should corral Republicans before

Wednesday to coordinate the questioning of the whistleblowers so

the country learns the depth of the scandal.

Bruce Wilds/AdvancingTime Blog Ever wonder why you have

not been hearing about the penny being...

Bruce Wilds/AdvancingTime Blog Ever wonder why you have

not been hearing about the penny being...

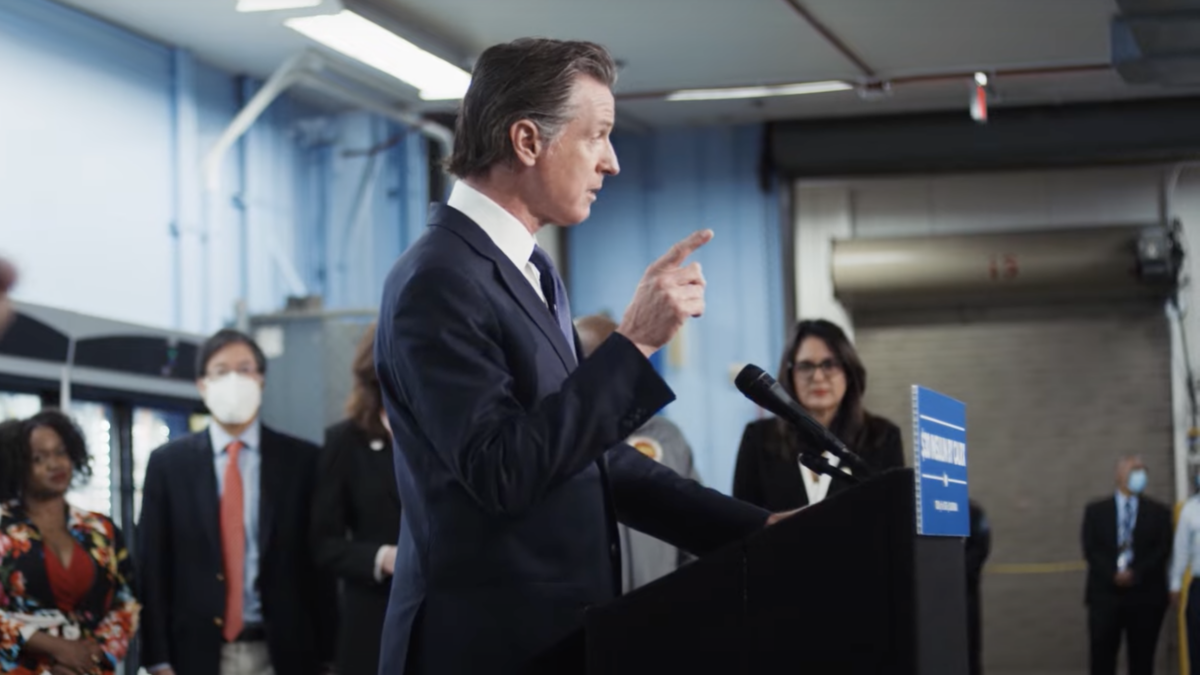

California

Democrats led by Gov. Gavin Newsom are giving the Biden regime a

run for its money in the race to control Americans speech.

California

Democrats led by Gov. Gavin Newsom are giving the Biden regime a

run for its money in the race to control Americans speech. Americans

should be concerned about the safety of their employees and the

sustainability of their business operations in China.

Americans

should be concerned about the safety of their employees and the

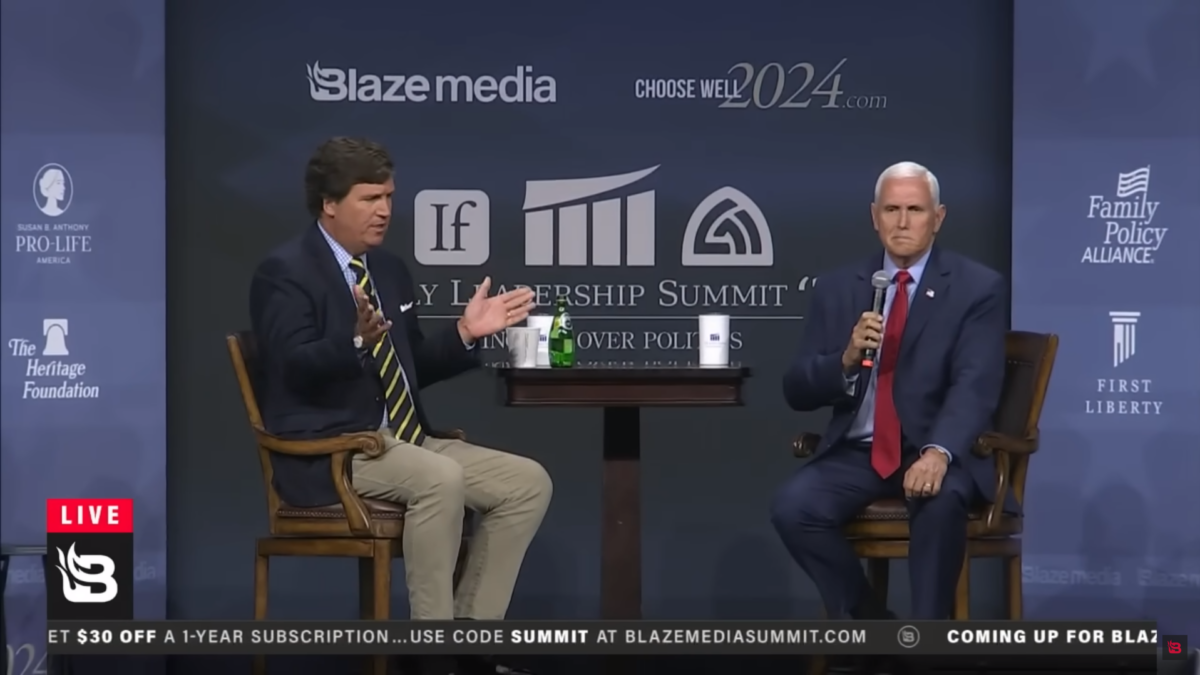

sustainability of their business operations in China. Tucker

Carlson, the New Contras, and their merry band of misfits are doing

the job legacy media refuse to, and they're getting better at

it.

Tucker

Carlson, the New Contras, and their merry band of misfits are doing

the job legacy media refuse to, and they're getting better at

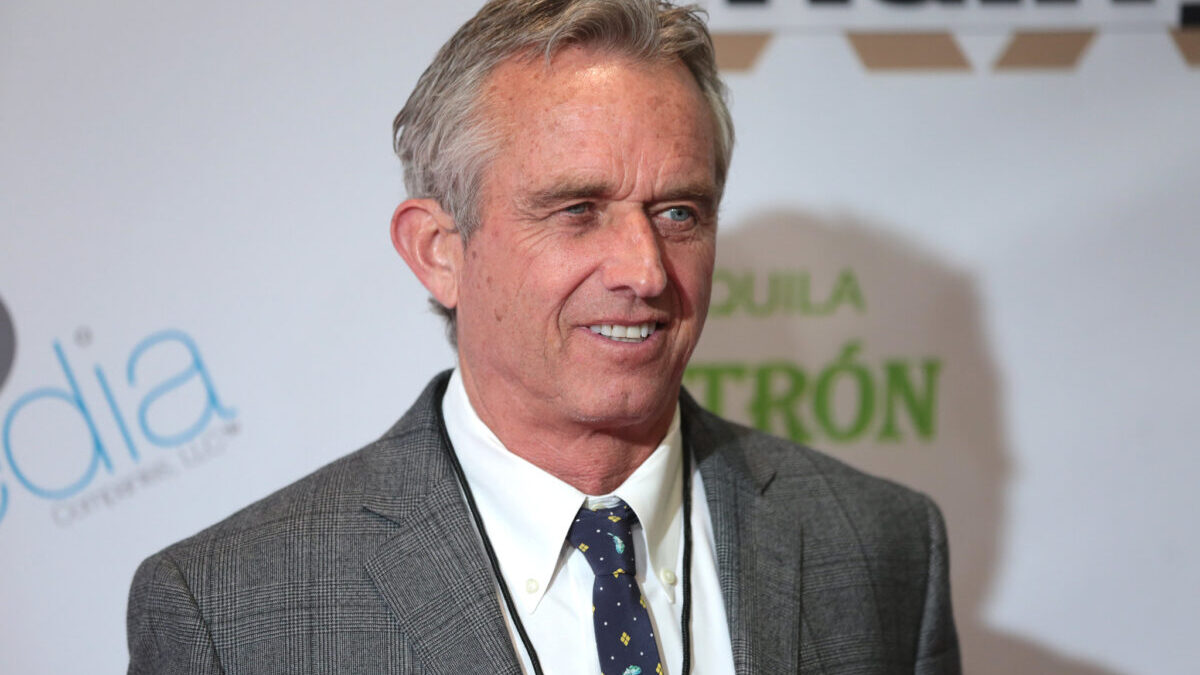

it. Sticking

with Biden prevents open primaries that might demonstrate the

appeal RFK Jr.s ideas have among millions of ordinary voters.

Sticking

with Biden prevents open primaries that might demonstrate the

appeal RFK Jr.s ideas have among millions of ordinary voters. Before

the spread of Christianity, the world was a veritable Epstein

Archipelago.

Before

the spread of Christianity, the world was a veritable Epstein

Archipelago.:focal(1460x973:1461x974)/https:/tf-cmsv2-smithsonianmag-media.s3.amazonaws.com/filer_public/22/f0/22f0c891-cb9e-471a-8e27-41abcf7331df/gettyimages-)